Hydra-7@ADC Status


Hydra has been moved to the new data center; updates are posted on the Data Center Move page. You can view the list of all the available modules as an HTML document or as a plain ASCII text file. You can also check the bandwidth between SAO and HDC. This page can be refreshed every 5m, 20m, or 1hr; this one auto-refreshes every 20m.

-

Usage

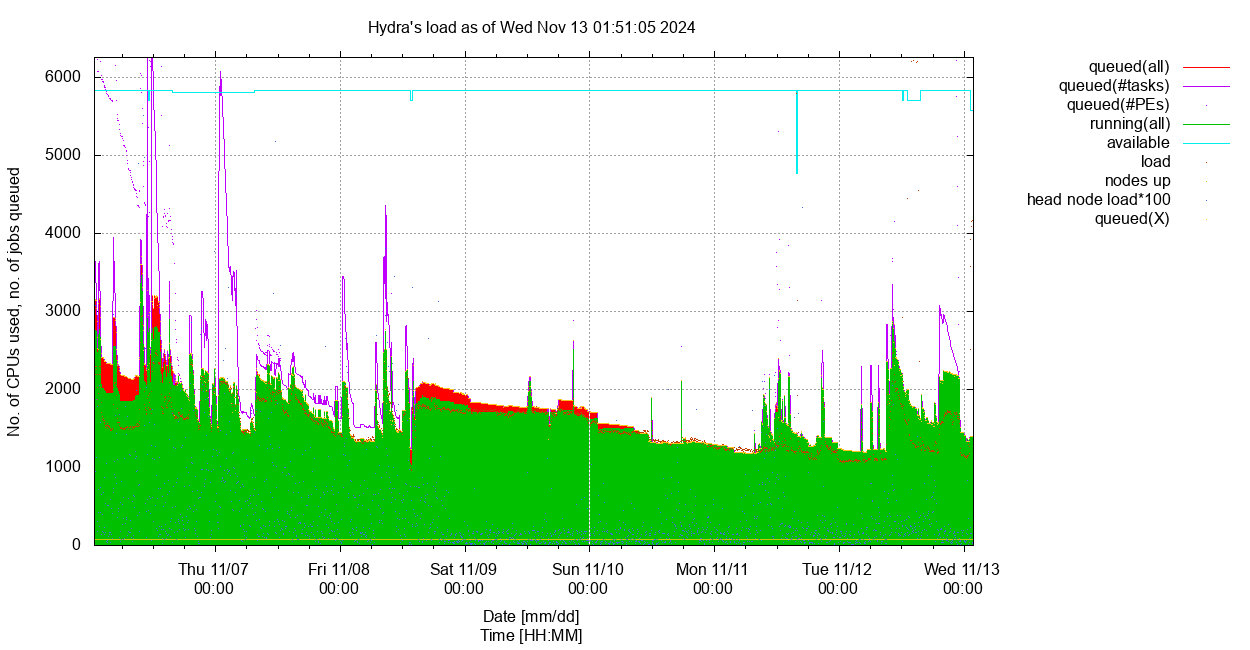

Plots: current cluster snapshot, and usage vs. time.

As of Sat Jan 10 11:17:03 2026: #CPUs/nodes 5868/74, 0 down.

Loads: head node: 0.37, login nodes: 0.49, 0.01, 0.11, 0.23; NSDs: 0.80, 0.07, 0.23, 8.03, 8.28; licenses: none used.

Queues status: none disabled, none need attention, none in error state.

13 users with running jobs (slots/jobs):

breusingc=16/1 corderm=1 guerravc=1 horowitzj=8/1 jbak=540 johnsonsj=10/1 mghahrem=2 nelsonjo=20/1 peresph=120/10 ssanjaripour=32/1 uribeje=8/1 wirshingh=6/1 xuj=2

Current load: 543.7, #running (slots/jobs): 766/563, usage: 13.1%, efficiency: 71.0%

3 users with queued jobs (jobs/tasks/slots):

jbak=2/160/160 mghahrem=2/282/282 ssanjaripour=1/1/32

Total number of queued jobs/tasks/slots: 5/443/474

60 users have/had running or queued jobs over the past 7 days, 67 over the past 15 days, and 99 over the past 30 days.

Click on the tabs to view each section, on the plots to view larger versions.

You can view the current cluster snapshot sorted by name, no. cpu, usage, load or memory, and view the past load for 7, 15, or 30 days, as well as highlight a given user, by selecting the corresponding options in the drop-down menus.

This page was last updated on Saturday, 10-Jan-2026 11:24:20 EST with mk-webpage.pl ver. 7.3/1 (Oct 2025/SGK) in 2:32.

Warnings

Oversubscribed Jobs

As of Sat Jan 10 11:17:04 EST 2026 (2 oversubscribed jobs, showing no more than 3 per user)

Total running (PEs/jobs) = 766/563, 5 queued (jobs), showing only oversubscribed jobs (cpu% > 133% & age > 1h) for all users.

| jobID | name | user | age | nPEs | cpu% | queue | node | taskID |
|---|---|---|---|---|---|---|---|---|
| 11787164 | m_2_prithvi_pip | xuj | +3:04 | 1 | 925.8% | lTgpu.q | 79-02 | |
| 11787245 | m_2_prithvi_pip | xuj | 10:06 | 1 | 231.6% | lTgpu.q | 79-01 | |

⇒ Equivalent to 9.6 overused CPUs: 2 CPUs used at 578.7% on average.
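The summary line can be reproduced from the two jobs listed. The sketch below assumes (this is inferred from the numbers, not stated on the page) that cpu% is reported per allocated PE, so a job's overuse is nPEs × (cpu% − 100%); the function name is illustrative only.

```python
# Rows copied from the oversubscribed-jobs listing: (jobID, nPEs, cpu%).
jobs = [
    (11787164, 1, 925.8),
    (11787245, 1, 231.6),
]

def overuse_summary(jobs):
    """Return (overused CPUs, total PEs, PE-weighted mean cpu%).

    Assumption: each PE is entitled to 100% of one CPU, and anything
    above that counts as overuse.
    """
    total_pes = sum(n for _, n, _ in jobs)
    weighted_cpu = sum(n * cpu for _, n, cpu in jobs)
    overused = (weighted_cpu - 100.0 * total_pes) / 100.0
    mean_cpu = weighted_cpu / total_pes
    return overused, total_pes, mean_cpu

overused, pes, mean_cpu = overuse_summary(jobs)
print(f"{overused:.1f} overused CPUs: {pes} CPUs used at {mean_cpu:.1f}% on average")
```

The same weighting, read the other way, matches the inefficient-jobs summary: 164 CPUs at 11.4% leave 164 × (1 − 0.114) ≈ 145.3 CPUs underused.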

Inefficient Jobs

As of Sat Jan 10 11:17:05 EST 2026 (14 inefficient jobs, showing no more than 3 per user)

Total running (PEs/jobs) = 766/563, 5 queued (jobs), showing only inefficient jobs (cpu% < 33% & age > 1h) for all users.

| jobID | name | user | age | nPEs | cpu% | queue | node | taskID |
|---|---|---|---|---|---|---|---|---|
| 11796561 | ass2 | peresph | +1:19 | 12 | 13.4% | mThM.q | 76-14 | |
| 11796562 | ass3 | peresph | +1:19 | 12 | 16.7% | mThM.q | 76-04 | |
| 11796563 | ass4 | peresph | +1:19 | 12 | 10.0% | mThM.q | 76-11 | |
| (more by peresph) | | | | | | | | |
| 11797065 | kuniq | johnsonsj | 23:58 | 10 | 12.0% | mThM.q | 65-13 | |
| 11797259 | mitobim_loop.jo | wirshingh | 22:39 | 6 | 16.6% | mThC.q | 64-11 | |
| 11797485 | v | uribeje | 07:48 | 8 | 12.5% | uThM.q | 75-02 | |
| 11797509 | iqtree_65_outli | nelsonjo | 01:50 | 20 | 4.9% | lThC.q | 65-02 | |

⇒ Equivalent to 145.3 underused CPUs: 164 CPUs used at 11.4% on average.

To see them all use: 'q+ -ineff -u peresph' (10)

Nodes with Excess Load

As of Sat Jan 10 11:17:08 EST 2026 (4 nodes have a high load, offset=1.5)

| node | #CPUs | #slots used | load | excess load |
|---|---|---|---|---|
| 76-02 | 192 | 0 | 2.3 | 2.3 * |
| 76-03 | 192 | 14 | 18.7 | 4.7 * |
| 76-04 | 192 | 12 | 13.7 | 1.7 * |
| 79-02 | 20 | 2 | 7.9 | 5.9 * |

Total excess load = 14.5
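The excess-load column appears to be the node load minus the number of slots in use, with a node flagged once that difference exceeds the offset. This reading is an inference from the four rows, not something the page states; a small sketch under that assumption:

```python
OFFSET = 1.5  # "offset=1.5" from the report header

# (node, #CPUs, #slots used, load), copied from the listing.
nodes = [
    ("76-02", 192, 0, 2.3),
    ("76-03", 192, 14, 18.7),
    ("76-04", 192, 12, 13.7),
    ("79-02", 20, 2, 7.9),
]

def excess_load(slots_used, load):
    # Assumption: excess is the load not accounted for by allocated slots.
    return load - slots_used

flagged = [
    (name, round(excess_load(used, load), 1))
    for name, _, used, load in nodes
    if excess_load(used, load) > OFFSET
]

for name, excess in flagged:
    print(f"{name}: excess load {excess}")
```

Summing the rounded per-node values gives 14.6 rather than the reported 14.5; the report presumably sums unrounded loads.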

High Memory Jobs

Statistics

| User name | nSlots used | % | memory reserved [TB] | % | memory used [TB] | % | vmem used [TB] | maxvmem used [TB] | ratio resd/maxvm |
|---|---|---|---|---|---|---|---|---|---|
| peresph | 120 | 87.0% | 1.1719 | 57.1% | 0.1447 | 28.4% | 0.0283 | 0.3729 | 3.1 |
| johnsonsj | 10 | 7.2% | 0.4883 | 23.8% | 0.3491 | 68.5% | 0.3781 | 0.4007 | 1.2 |
| uribeje | 8 | 5.8% | 0.3906 | 19.0% | 0.0158 | 3.1% | 0.0158 | 0.0158 | 24.7 |
| Total | 138 | | 2.0508 | | 0.5096 | | 0.4223 | 0.7895 | 2.6 |
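The last column is the reserved memory divided by the peak virtual memory (maxvmem), so a large value means far more memory was reserved than the jobs ever touched. A quick check of the figures, with the values copied from the statistics (variable names are illustrative):

```python
# (user, memory reserved [TB], maxvmem used [TB]) from the statistics.
rows = [
    ("peresph", 1.1719, 0.3729),
    ("johnsonsj", 0.4883, 0.4007),
    ("uribeje", 0.3906, 0.0158),
]

def reserved_to_peak(reserved_tb, maxvmem_tb):
    """Ratio of reserved memory to peak virtual memory."""
    return reserved_tb / maxvmem_tb

ratios = {user: round(reserved_to_peak(resd, peak), 1) for user, resd, peak in rows}
total_ratio = round(reserved_to_peak(2.0508, 0.7895), 1)

for user, ratio in ratios.items():
    print(f"{user:10s} {ratio:5.1f}")
print(f"{'Total':10s} {total_ratio:5.1f}")
```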

Warnings

12 high memory jobs produced a warning:

1 for johnsonsj, 10 for peresph, 1 for uribeje.

Details for each job can be found here.

-

Breakdown by Queue


Current Usage by Queue

| Queues | Total | Limit | Fill factor | Efficiency |
|---|---|---|---|---|
| sThC.q=0 mThC.q=554 lThC.q=21 uThC.q=0 | 575 | 5056 | 11.4% | 92.1% |
| sThM.q=0 mThM.q=146 lThM.q=0 uThM.q=8 | 154 | 4680 | 3.3% | 312.4% |
| sTgpu.q=2 mTgpu.q=0 lTgpu.q=2 qgpu.iq=32 | 36 | 104 | 34.6% | 32.9% |
| uTxlM.rq=0 | 0 | 536 | 0.0% | |
| lThMuVM.tq=0 | 0 | 384 | 0.0% | |
| lTb2g.q=0 | 0 | 2 | 0.0% | |
| lTIO.sq=0 | 0 | 8 | 0.0% | |
| lTWFM.sq=0 | 0 | 4 | 0.0% | |
| qrsh.iq=1 | 1 | 68 | 1.5% | 0.9% |

Total: 766
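The fill factor is the number of slots in use divided by the slot limit for that group of queues. A minimal sketch that reproduces the non-zero rows; the group labels are shorthand chosen here, not names used by the scheduler:

```python
# (slots in use, slot limit) per queue group, from the usage-by-queue figures.
usage = {
    "?ThC.q": (575, 5056),
    "?ThM.q": (154, 4680),
    "GPU queues": (36, 104),
    "qrsh.iq": (1, 68),
}

def fill_factor(used, limit):
    """Percentage of the slot limit currently in use."""
    return 100.0 * used / limit

for group, (used, limit) in usage.items():
    print(f"{group:10s} {used:4d}/{limit:<5d} {fill_factor(used, limit):5.1f}%")

# The per-group totals add up to the overall number of running slots.
total_running = sum(used for used, _ in usage.values())
print("Total:", total_running)
```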

-

Avail Slots/Wait Job(s)

Available Slots

As of Sat Jan 10 11:17:06 EST 2026, per host group (hgrp), for minMem=1.0G/slot:

| | @hicpu-hosts | @himem-hosts | @xlmem-hosts | @gpu-hosts |
|---|---|---|---|---|
| avail(slots) | 4339 | 3959 | 536 | 68 |
| free(load) | 5113.3 | 4674.5 | 536.0 | 103.5 |
| unresd(mem) | 37168.9G | 33159.0G | 8063.0G | 744.2G |
| total(nCPU) | 5120 | 4680 | 536 | 104 |
| total(mem) | 39.8T | 35.8T | 7.9T | 0.7T |
| unused(slots) | 4391 (85.8%) | 4011 (85.7%) | 536 (100.0%) | 68 (65.4%) |
| unused(load) | 5113.3 (99.9%) | 4674.5 (99.9%) | 536.0 (100.0%) | 103.5 (99.5%) |
| unreserved(mem) | 36.3T (91.1%) | 32.4T (90.5%) | 7.9T (100.0%) | 0.7T (98.7%) |
| unused(mem) | 38.5T (96.6%) | 34.6T (96.7%) | 7.8T (98.9%) | 0.6T (81.5%) |
| unreserved(mem) per unused(slots) | 8.5G | 8.3G | 15.0G | 10.9G |
| unused(mem) per unused(slots) | 9.0G | 8.8G | 14.9G | 9.0G |

GPU Usage

Sat Jan 10 11:17:12 EST 2026

hostgroup: @gpu-hosts (3 hosts)

| hostname | memory total (GB) | memory used (GB) | memory resd (GB) | #GPU a/u | nCPU | slots used | load | slots free | CPUs unused |
|---|---|---|---|---|---|---|---|---|---|
| compute-50-01 | 503.3 | 77.0 | 426.3 | 4/2 | 64 | 33 | 2.2 | 31 | 61.8 |
| compute-79-01 | 125.5 | 12.4 | 113.1 | 2/1 | 20 | 1 | 1.8 | 19 | 18.2 |
| compute-79-02 | 125.5 | 49.9 | 75.6 | 2/2 | 20 | 2 | 7.9 | 18 | 12.1 |

Total GPU=8, used=5 (62.5%)

Waiting Job(s)

As of Sat Jan 10 11:17:07 EST 2026

2 jobs waiting for jbak:

| jobID | jobName | user | age | nPEs | memReqd | queue | taskID |
|---|---|---|---|---|---|---|---|
| 11797521 | J_20220731T-tb+ | jbak | 00:10 | 1 | | mThC.q | 201-280:1 |
| 11797522 | J_20220731T-tb+ | jbak | 00:09 | 1 | | mThC.q | 201-280:1 |

| quota rule | resource=value/limit | %used | |
|---|---|---|---|
| max_slots_per_user/1 | slots=540/840 | 64.3% | for jbak |
| max_hC_slots_per_user/2 | slots=540/840 | 64.3% | for jbak in queue mThC.q |
| max_mem_res_per_user/1 | mem_res=1.055T/9.985T | 10.6% | for jbak in queue uThC.q |

2 jobs waiting for mghahrem:

| jobID | jobName | user | age | nPEs | memReqd | queue | taskID |
|---|---|---|---|---|---|---|---|
| 11797337 | A10_Map_Creatio | mghahrem | 21:08 | 1 | | sTgpu.q | 2-143:1 |
| 11797338 | A10_Map_Creatio | mghahrem | 21:07 | 1 | | sTgpu.q | 4-143:1 |

| quota rule | resource=value/limit | %used | |
|---|---|---|---|
| total_gpus_per_user/1 | GPUS=2/4 | 50.0% | for mghahrem in queue qgpu.iq |
| max_gpus_per_user/1 | GPUS=2/4 | 50.0% | for mghahrem in queue sTgpu.q |
| max_slots_per_user/1 | slots=2/840 | 0.2% | for mghahrem |

1 job waiting for ssanjaripour:

| jobID | jobName | user | age | nPEs | memReqd | queue | taskID |
|---|---|---|---|---|---|---|---|
| 11797490 | jetformer_astro | ssanjaripour | 04:47 | 32 | | lTgpu.q | |

| quota rule | resource=value/limit | %used | |
|---|---|---|---|
| max_gpus_per_user/4 | GPUS=1/1 | 100.0% | for ssanjaripour in queue qgpu.iq |
| max_concurrent_jobs_per_u | no_concurrent_jobs=1/1 | 100.0% | for ssanjaripour in queue qgpu.iq |
| total_gpus_per_user/1 | GPUS=1/4 | 25.0% | for ssanjaripour in queue qgpu.iq |
| max_slots_per_user/1 | slots=32/840 | 3.8% | for ssanjaripour |

Overall Quota Usage

| quota rule | resource=value/limit | %used | |
|---|---|---|---|
| total_gpus/1 | GPUS=2/8 | 25.0% | for * in queue sTgpu.q |
| total_gpus/1 | GPUS=2/8 | 25.0% | for * in queue lTgpu.q |
| total_slots/1 | slots=766/5960 | 12.9% | for * |
| blast2GO/1 | slots=14/110 | 12.7% | for * |
| total_gpus/1 | GPUS=1/8 | 12.5% | for * in queue qgpu.iq |
| total_mem_res/2 | mem_res=2.301T/35.78T | 6.4% | for * in queue uThM.q |
| total_mem_res/1 | mem_res=1.225T/39.94T | 3.1% | for * in queue uThC.q |
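Each quota entry has the form resource=value/limit, and %used is simply value divided by limit. The sketch below parses such an entry with a regular expression; the pattern and the function name are illustrative, and it assumes that value and limit carry the same unit suffix, as they do in the entries shown.

```python
import re

# Matches entries such as "slots=766/5960" or "mem_res=2.301T/35.78T".
ENTRY = re.compile(
    r"(?P<name>\w+)=(?P<value>[\d.]+)(?P<vunit>[A-Z]?)"
    r"/(?P<limit>[\d.]+)(?P<lunit>[A-Z]?)"
)

def pct_used(entry):
    """Return value/limit as a percentage for one 'resource=value/limit' entry."""
    m = ENTRY.fullmatch(entry)
    if m is None:
        raise ValueError(f"unrecognized entry: {entry!r}")
    if m["vunit"] != m["lunit"]:
        # Assumption: value and limit share a unit suffix, as in the rows shown.
        raise ValueError(f"mixed units in {entry!r}")
    return 100.0 * float(m["value"]) / float(m["limit"])

for entry in ("slots=766/5960", "GPUS=2/8", "slots=14/110", "mem_res=2.301T/35.78T"):
    print(f"{entry:24s} {pct_used(entry):5.1f}%")
```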

-

Memory Usage

Reserved Memory, All High-Memory Queues


Current Memory Quota Usage

As of Sat Jan 10 11:17:08 EST 2026

| quota rule | resource=value/limit | %used | filter |
|---|---|---|---|
| total_mem_res/1 | mem_res=1.225T/39.94T | 3.1% | for * in queue uThC.q |
| total_mem_res/2 | mem_res=2.301T/35.78T | 6.4% | for * in queue uThM.q |

Current Memory Usage by Compute Node, High Memory Nodes Only

hostgroup: @himem-hosts (54 hosts); memory in GB

| hostname | mem avail | mem used | mem resd | mem unused | mem unresd | nCPU | slots used | load | slots free | CPUs unused |
|---|---|---|---|---|---|---|---|---|---|---|
| compute-64-17 | 503.5 | 11.8 | 0.2 | 491.7 | 503.3 | 32 | 0 | 0.0 | 32 | 32.0 |
| compute-64-18 | 503.5 | 12.1 | 0.2 | 491.4 | 503.3 | 32 | 0 | 0.0 | 32 | 32.0 |
| compute-65-02 | 503.5 | 19.6 | 92.0 | 483.9 | 411.5 | 64 | 26 | 6.3 | 38 | 57.7 |
| compute-65-03 | 503.5 | 19.2 | 134.0 | 484.3 | 369.5 | 64 | 19 | 11.8 | 45 | 52.2 |
| compute-65-04 | 503.5 | 17.9 | 14.0 | 485.6 | 489.5 | 64 | 7 | 6.2 | 57 | 57.8 |
| compute-65-05 | 503.5 | 16.5 | 16.0 | 487.0 | 487.5 | 64 | 8 | 7.1 | 56 | 56.9 |
| compute-65-06 | 503.5 | 19.3 | 134.0 | 484.2 | 369.5 | 64 | 19 | 7.2 | 45 | 56.8 |
| compute-65-07 | 503.5 | 18.1 | 14.0 | 485.4 | 489.5 | 64 | 7 | 6.3 | 57 | 57.7 |
| compute-65-09 | 503.5 | 17.8 | 72.0 | 485.7 | 431.5 | 64 | 12 | 6.5 | 52 | 57.5 |
| compute-65-10 | 503.5 | 18.8 | 14.0 | 484.7 | 489.5 | 64 | 7 | 6.2 | 57 | 57.8 |
| compute-65-11 | 503.5 | 19.2 | 16.0 | 484.3 | 487.5 | 64 | 8 | 7.0 | 56 | 57.0 |
| compute-65-12 | 503.5 | 17.3 | 16.0 | 486.2 | 487.5 | 64 | 8 | 7.0 | 56 | 57.0 |
| compute-65-13 | 503.5 | 18.7 | 502.0 | 484.8 | 1.5 | 64 | 11 | 4.8 | 53 | 59.2 |
| compute-65-14 | 503.5 | 16.9 | 270.0 | 486.6 | 233.5 | 64 | 23 | 6.3 | 41 | 57.7 |
| compute-65-15 | 503.5 | 18.3 | 14.0 | 485.2 | 489.5 | 64 | 7 | 6.2 | 57 | 57.8 |
| compute-65-16 | 503.5 | 18.6 | 16.0 | 484.9 | 487.5 | 64 | 8 | 7.1 | 56 | 56.9 |
| compute-65-17 | 503.5 | 20.3 | 16.0 | 483.2 | 487.5 | 64 | 8 | 7.1 | 56 | 56.9 |
| compute-65-18 | 503.5 | 16.3 | 16.0 | 487.2 | 487.5 | 64 | 8 | 7.0 | 56 | 57.0 |
| compute-65-19 | 503.5 | 17.6 | 14.0 | 485.9 | 489.5 | 64 | 7 | 6.2 | 57 | 57.8 |
| compute-65-20 | 503.5 | 18.9 | 20.0 | 484.6 | 483.5 | 64 | 8 | 7.1 | 56 | 56.9 |
| compute-65-21 | 503.5 | 18.2 | 16.0 | 485.3 | 487.5 | 64 | 8 | 7.0 | 56 | 57.0 |
| compute-65-22 | 503.5 | 18.9 | 16.0 | 484.6 | 487.5 | 64 | 8 | 7.0 | 56 | 57.0 |
| compute-65-23 | 503.5 | 18.4 | 16.0 | 485.1 | 487.5 | 64 | 8 | 7.0 | 56 | 57.0 |
| compute-65-24 | 503.5 | 18.9 | 16.0 | 484.6 | 487.5 | 64 | 8 | 7.1 | 56 | 56.9 |
| compute-65-25 | 503.5 | 19.4 | 16.0 | 484.1 | 487.5 | 64 | 8 | 7.0 | 56 | 57.0 |
| compute-65-26 | 503.5 | 17.0 | 16.0 | 486.5 | 487.5 | 64 | 8 | 7.0 | 56 | 57.0 |
| compute-65-27 | 503.5 | 18.9 | 16.0 | 484.6 | 487.5 | 64 | 8 | 7.0 | 56 | 57.0 |
| compute-65-28 | 503.5 | 19.4 | 16.0 | 484.1 | 487.5 | 64 | 8 | 7.0 | 56 | 57.0 |
| compute-65-29 | 503.5 | 19.5 | 16.0 | 484.0 | 487.5 | 64 | 8 | 7.0 | 56 | 57.0 |
| compute-65-30 | 503.5 | 17.8 | 16.0 | 485.7 | 487.5 | 64 | 8 | 7.0 | 56 | 57.0 |
| compute-75-01 | 1007.5 | 26.2 | 32.1 | 981.3 | 975.4 | 128 | 16 | 13.9 | 112 | 114.1 |
| compute-75-02 | 1007.5 | 25.4 | 432.0 | 982.1 | 575.5 | 128 | 24 | 14.8 | 104 | 113.2 |
| compute-75-03 | 755.5 | 25.6 | 32.0 | 729.9 | 723.5 | 128 | 16 | 13.9 | 112 | 114.1 |
| compute-75-04 | 755.0 | 26.2 | 29.5 | 728.8 | 725.5 | 128 | 15 | 13.2 | 113 | 114.8 |
| compute-75-05 | 755.5 | 26.1 | 32.0 | 729.4 | 723.5 | 128 | 16 | 14.0 | 112 | 114.0 |
| compute-75-06 | 755.5 | 25.7 | 120.0 | 729.8 | 635.5 | 128 | 12 | 10.1 | 116 | 117.9 |
| compute-75-07 | 755.5 | 25.9 | 32.0 | 729.6 | 723.5 | 128 | 16 | 14.0 | 112 | 114.0 |
| compute-76-03 | 1007.4 | 27.0 | 28.5 | 980.4 | 978.9 | 128 | 14 | 12.4 | 114 | 115.5 |
| compute-76-04 | 1007.4 | 19.2 | 120.0 | 988.2 | 887.4 | 128 | 12 | 9.2 | 116 | 118.8 |
| compute-76-05 | 1007.4 | 26.6 | 30.0 | 980.8 | 977.4 | 128 | 15 | 13.3 | 113 | 114.7 |
| compute-76-06 | 1007.4 | 26.8 | 148.0 | 980.6 | 859.4 | 128 | 26 | 13.3 | 102 | 114.7 |
| compute-76-07 | 1007.4 | 22.2 | 148.0 | 985.2 | 859.4 | 128 | 26 | 13.3 | 102 | 114.7 |
| compute-76-08 | 1007.4 | 25.1 | 120.0 | 982.3 | 887.4 | 128 | 12 | 11.9 | 116 | 116.1 |
| compute-76-09 | 1007.4 | 27.3 | 148.0 | 980.1 | 859.4 | 128 | 26 | 13.4 | 102 | 114.6 |
| compute-76-10 | 1007.4 | 28.5 | 30.0 | 978.9 | 977.4 | 128 | 15 | 13.2 | 113 | 114.8 |
| compute-76-11 | 1007.4 | 27.6 | 148.0 | 979.8 | 859.4 | 128 | 26 | 13.4 | 102 | 114.6 |
| compute-76-12 | 1007.4 | 26.8 | 30.0 | 980.6 | 977.4 | 128 | 15 | 13.3 | 113 | 114.7 |
| compute-76-13 | 1007.4 | 26.7 | 30.0 | 980.7 | 977.4 | 128 | 15 | 13.3 | 113 | 114.7 |
| compute-76-14 | 1007.4 | 22.5 | 134.0 | 984.9 | 873.4 | 128 | 19 | 10.5 | 109 | 117.5 |
| compute-84-01 | 881.1 | 104.2 | 28.0 | 776.9 | 853.1 | 112 | 14 | 12.2 | 98 | 99.8 |
| compute-93-01 | 503.8 | 18.8 | 16.0 | 485.0 | 487.8 | 64 | 8 | 7.1 | 56 | 56.9 |
| compute-93-02 | 755.6 | 21.4 | 20.0 | 734.2 | 735.6 | 72 | 10 | 8.7 | 62 | 63.3 |
| compute-93-03 | 755.6 | 21.3 | 20.0 | 734.3 | 735.6 | 72 | 10 | 8.7 | 62 | 63.3 |
| compute-93-04 | 755.6 | 20.3 | 20.0 | 735.3 | 735.6 | 72 | 10 | 8.8 | 62 | 63.2 |
| Totals | 36637.5 | 1213.0 | 3478.5 | | | 4680 | 669 | 483.3 | | |
| ==> | | 3.3% | 9.5% | | | | 14.3% | 10.3% | | |

Most unreserved/unused memory (978.9/980.4GB) is on compute-76-03 with 114/115.5 slots/CPUs free/unused.

hostgroup: @xlmem-hosts (4 hosts); memory in GB

| hostname | mem avail | mem used | mem resd | mem unused | mem unresd | nCPU | slots used | load | slots free | CPUs unused |
|---|---|---|---|---|---|---|---|---|---|---|
| compute-76-01 | 1511.4 | 16.8 | -0.0 | 1494.6 | 1511.4 | 192 | 0 | 0.1 | 192 | 191.9 |
| compute-76-02 | 1511.4 | 35.8 | -0.0 | 1475.6 | 1511.4 | 192 | 0 | 2.2 | 192 | 189.8 |
| compute-93-05 | 2016.3 | 17.6 | 0.0 | 1998.7 | 2016.3 | 96 | 0 | 0.0 | 96 | 96.0 |
| compute-93-06 | 3023.9 | 14.5 | 0.0 | 3009.4 | 3023.9 | 56 | 0 | 0.1 | 56 | 55.9 |
| Totals | 8063.0 | 84.7 | 0.0 | | | 536 | 0 | 2.4 | | |
| ==> | | 1.1% | 0.0% | | | | 0.0% | 0.4% | | |

Most unreserved/unused memory (3023.9/3009.4GB) is on compute-93-06 with 56/55.9 slots/CPUs free/unused.

Past Memory Usage vs Memory Reservation

Past memory use in hi-mem queues between 12/31/25 and 01/07/26

queues: ?ThM.q

| user name | total no. of jobs/slots | total elapsed time [d] | eff. [%] | mean reserved [GB] | mean maxvmem [GB] | mean average [GB] | ratio resd/maxvmem | |
|---|---|---|---|---|---|---|---|---|
| kweskinm | 1/8 | 0.00 | 10.1 | 400.0 | 0.5 | 0.0 | 840.8 | > 2.5 |
| nelsonjo | 4/46 | 0.01 | 82.4 | 419.9 | 10.1 | 2.1 | 41.5 | > 2.5 |
| pcristof | 2/2 | 0.02 | 83.8 | 300.0 | 0.4 | 0.3 | 700.0 | > 2.5 |
| nevesk | 138/1656 | 0.06 | 74.5 | 600.0 | 10.7 | 5.9 | 56.0 | > 2.5 |
| hchong | 1/1 | 0.11 | 89.5 | 200.0 | 86.2 | 58.8 | 2.3 | |
| gouldingt | 1/8 | 0.14 | 73.5 | 96.0 | 39.8 | 28.1 | 2.4 | |
| uribeje | 65/574 | 0.35 | 127.4 | 192.4 | 14.8 | 10.5 | 13.0 | > 2.5 |
| granquistm | 34/204 | 0.36 | 17.9 | 150.0 | 35.2 | 0.3 | 4.3 | > 2.5 |
| szieba | 29/1160 | 0.50 | 33.4 | 0.0 | 903.1 | 7.9 | 0.0 | |
| santosbe | 7/174 | 0.62 | 86.6 | 192.5 | 85.9 | 2.8 | 2.2 | |
| johnsonsj | 4/40 | 0.68 | 21.9 | 0.0 | 408.4 | 338.6 | 0.0 | |
| mghahrem | 9/51 | 1.04 | 51.2 | 418.4 | 224.3 | 33.4 | 1.9 | |
| niez | 12/192 | 1.15 | 71.3 | 160.0 | 329.6 | 1.3 | 0.5 | |
| palmerem | 46/46 | 2.25 | 99.7 | 298.6 | 3.7 | 2.9 | 80.6 | > 2.5 |
| stlaurentr | 150/1280 | 2.95 | 76.2 | 127.6 | 47.3 | 15.5 | 2.7 | > 2.5 |
| morrisseyd | 3033/3033 | 3.38 | 77.2 | 16.0 | 1.4 | 0.6 | 11.7 | > 2.5 |
| medeirosi | 155/1240 | 3.50 | 64.3 | 268.2 | 141.3 | 0.1 | 1.9 | |
| franzena | 39/430 | 3.61 | 80.2 | 161.6 | 92.9 | 33.7 | 1.7 | |
| breusingc | 70/1090 | 4.85 | 10.4 | 243.7 | 44.8 | 0.1 | 5.4 | > 2.5 |
| woodh | 283/1132 | 41.62 | 89.0 | 100.0 | 43.4 | 17.3 | 2.3 | |
| vohsens | 403/6448 | 72.65 | 83.1 | 256.0 | 101.8 | 6.3 | 2.5 | > 2.5 |
| pappalardop | 564/564 | 87.20 | 99.8 | 300.0 | 3.4 | 2.8 | 87.1 | > 2.5 |
| all | 5050/19379 | 227.05 | 88.1 | 236.4 | 53.4 | 8.3 | 4.4 | > 2.5 |

queues: ?TxlM.rq

| user name | total no. of jobs/slots | total elapsed time [d] | eff. [%] | mean reserved [GB] | mean maxvmem [GB] | mean average [GB] | ratio resd/maxvmem | |
|---|---|---|---|---|---|---|---|---|
| all | 0/0 | 0.00 | | | | | | |

-

Resource Limits

Limit slots for all users together

- users * to slots=5960
- users * queues sThC.q,lThC.q,mThC.q,uThC.q to slots=5176
- users * queues sThM.q,mThM.q,lThM.q,uThM.q to slots=4680
- users * queues uTxlM.rq to slots=536
- users * queues sTgpu.q,mTgpu.q,lTgpu.q to slots=104

Limit slots/user for xlMem restricted queue

- users {*} queues {uTxlM.rq} to slots=536

Limit total reserved memory for all users per queue type

- users * queues sThC.q,mThC.q,lThC.q,uThC.q to mem_res=40902G
- users * queues sThM.q,mThM.q,lThM.q,uThM.q to mem_res=36637G
- users * queues uTxlM.rq to mem_res=8063G

Limit slots/user for interactive (qrsh) queues

- users {*} queues {qrsh.iq} to slots=16

Limit GPUs for all users in GPU queues to the avail no of GPUs

- users * queues {sTgpu.q,mTgpu.q,lTgpu.q,qgpu.iq} to GPUS=8

Limit GPUs per user in all the GPU queues

- users {*} queues sTgpu.q,mTgpu.q,lTgpu.q,qgpu.iq to GPUS=4

Limit GPUs per user in each GPU queues

- users {*} queues {sTgpu.q} to GPUS=4
- users {*} queues {mTgpu.q} to GPUS=3
- users {*} queues {lTgpu.q} to GPUS=2
- users {*} queues {qgpu.iq} to GPUS=1

Limit to set aside a slot for blast2GO

- users * queues !lTb2g.q hosts {@b2g-hosts} to slots=110
- users * queues lTb2g.q hosts {@b2g-hosts} to slots=1
- users {*} queues lTb2g.q hosts {@b2g-hosts} to slots=1

Limit total bigtmp concurrent request per user

- users {*} to big_tmp=25

Limit total number of idl licenses per user

- users {*} to idlrt_license=102

Limit slots for io queue per user

- users {*} queues {lTIO.sq} to slots=8

Limit slots for io queue per user

- users {*} queues {lTWFM.sq} to slots=2

Limit the number of concurrent jobs per user for some queues

- users {*} queues {uTxlM.rq} to no_concurrent_jobs=3
- users {*} queues {lTIO.sq} to no_concurrent_jobs=2
- users {*} queues {lWFM.sq} to no_concurrent_jobs=1
- users {*} queues {qrsh.iq} to no_concurrent_jobs=4
- users {*} queues {qgpu.iq} to no_concurrent_jobs=1

Limit slots/user in hiCPU queues

- users {*} queues {sThC.q} to slots=840
- users {*} queues {mThC.q} to slots=840
- users {*} queues {lThC.q} to slots=431
- users {*} queues {uThC.q} to slots=143

Limit slots/user for hiMem queues

- users {*} queues {sThM.q} to slots=840
- users {*} queues {mThM.q} to slots=585
- users {*} queues {lThM.q} to slots=390
- users {*} queues {uThM.q} to slots=73

Limit reserved memory per user for specific queues

- users {*} queues sThC.q,mThC.q,lThC.q,uThC.q to mem_res=10225G
- users {*} queues sThM.q,mThM.q,lThM.q,uThM.q to mem_res=9159G
- users {*} queues uTxlM.rq to mem_res=8063G

Limit slots/user for all queues

- users {*} to slots=840

-

Disk Usage & Quota

As of Sat Jan 10 11:06:02 EST 2026

Disk Usage

| Filesystem | Size | Used | Avail | Capacity | Mounted on |
|---|---|---|---|---|---|
| netapp-fas83:/vol_home | 22.36T | 16.84T | 5.52T | 76%/12% | /home |
| netapp-fas83-n02:/vol_data_public | 332.50T | 44.35T | 288.15T | 14%/2% | /data/public |
| gpfs02:public | 800.00T | 458.55T | 341.45T | 58%/30% | /scratch/public |
| gpfs02:nmnh_bradys | 25.00T | 18.61T | 6.39T | 75%/58% | /scratch/bradys |
| gpfs02:nmnh_kistlerl | 120.00T | 98.58T | 21.42T | 83%/14% | /scratch/kistlerl |
| gpfs02:nmnh_meyerc | 25.00T | 18.85T | 6.15T | 76%/7% | /scratch/meyerc |
| gpfs02:nmnh_corals | 60.00T | 49.37T | 10.63T | 83%/23% | /scratch/nmnh_corals |
| gpfs02:nmnh_ggi | 130.00T | 36.46T | 93.54T | 29%/15% | /scratch/nmnh_ggi |
| gpfs02:nmnh_lab | 25.00T | 11.45T | 13.55T | 46%/11% | /scratch/nmnh_lab |
| gpfs02:nmnh_mammals | 35.00T | 27.29T | 7.71T | 78%/39% | /scratch/nmnh_mammals |
| gpfs02:nmnh_mdbc | 60.00T | 52.47T | 7.53T | 88%/25% | /scratch/nmnh_mdbc |
| gpfs02:nmnh_ocean_dna | 90.00T | 54.99T | 35.01T | 62%/2% | /scratch/nmnh_ocean_dna |
| gpfs02:nzp_ccg | 45.00T | 30.90T | 14.10T | 69%/3% | /scratch/nzp_ccg |
| gpfs01:ocio_dpo | 10.00T | 2.89T | 7.11T | 29%/1% | /scratch/ocio_dpo |
| gpfs01:ocio_ids | 5.00T | 0.00G | 5.00T | 0%/1% | /scratch/ocio_ids |
| gpfs02:pool_kozakk | 12.00T | 10.67T | 1.33T | 89%/2% | /scratch/pool_kozakk |
| gpfs02:pool_sao_access | 50.00T | 4.79T | 45.21T | 10%/9% | /scratch/pool_sao_access |
| gpfs02:pool_sao_rtdc | 20.00T | 908.33G | 19.11T | 5%/1% | /scratch/pool_sao_rtdc |
| gpfs02:sao_atmos | 350.00T | 219.77T | 130.23T | 63%/10% | /scratch/sao_atmos |
| gpfs02:sao_cga | 25.00T | 9.44T | 15.56T | 38%/28% | /scratch/sao_cga |
| gpfs02:sao_tess | 50.00T | 23.25T | 26.75T | 47%/83% | /scratch/sao_tess |
| gpfs02:scbi_gis | 95.00T | 60.94T | 34.06T | 65%/14% | /scratch/scbi_gis |
| gpfs02:nmnh_schultzt | 35.00T | 20.67T | 14.33T | 60%/75% | /scratch/schultzt |
| gpfs02:serc_cdelab | 15.00T | 12.03T | 2.97T | 81%/19% | /scratch/serc_cdelab |
| gpfs02:stri_ap | 25.00T | 18.96T | 6.04T | 76%/1% | /scratch/stri_ap |
| gpfs01:sao_sylvain | 145.00T | 87.06T | 57.94T | 61%/60% | /scratch/sylvain |
| gpfs02:usda_sel | 25.00T | 8.44T | 16.56T | 34%/30% | /scratch/usda_sel |
| gpfs02:wrbu | 50.00T | 40.70T | 9.30T | 82%/14% | /scratch/wrbu |
| nas1:/mnt/pool/public | 175.00T | 101.61T | 73.39T | 59%/1% | /store/public |
| nas1:/mnt/pool/nmnh_bradys | 40.00T | 14.58T | 25.42T | 37%/1% | /store/bradys |
| nas2:/mnt/pool/n1p3/nmnh_ggi | 90.00T | 36.28T | 53.72T | 41%/1% | /store/nmnh_ggi |
| nas2:/mnt/pool/nmnh_lab | 40.00T | 17.56T | 22.44T | 44%/1% | /store/nmnh_lab |
| nas2:/mnt/pool/nmnh_ocean_dna | 70.00T | 28.41T | 41.59T | 41%/1% | /store/nmnh_ocean_dna |
| nas1:/mnt/pool/nzp_ccg | 265.00T | 115.77T | 149.23T | 44%/1% | /store/nzp_ccg |
| nas2:/mnt/pool/nzp_cec | 40.00T | 20.50T | 19.50T | 52%/1% | /store/nzp_cec |
| nas2:/mnt/pool/n1p2/ocio_dpo | 50.00T | 3.07T | 46.93T | 7%/1% | /store/ocio_dpo |
| nas2:/mnt/pool/n1p1/sao_atmos | 750.00T | 392.87T | 357.13T | 53%/1% | /store/sao_atmos |
| nas2:/mnt/pool/n1p2/nmnh_schultzt | 80.00T | 24.96T | 55.04T | 32%/1% | /store/schultzt |
| nas1:/mnt/pool/sao_sylvain | 50.00T | 9.42T | 40.58T | 19%/1% | /store/sylvain |
| nas1:/mnt/pool/wrbu | 80.00T | 10.02T | 69.98T | 13%/1% | /store/wrbu |
| nas1:/mnt/pool/admin | 20.00T | 8.01T | 11.99T | 41%/1% | /store/admin |

You can view plots of disk use vs time for the past 7, 30, or 120 days, as well as plots of disk usage by user or by device (for the past 90 or 240 days, respectively).

Notes

Capacity shows % disk space full and % of inodes used.

When too many small files are written on a disk, the file system can become full because it is unable to keep track of new files.

The % of inodes used should be lower than or comparable to the % of disk space used.

If it is much larger, the disk can become unusable before it gets full.
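The rule of thumb in these notes can be applied directly to the Capacity column, which pairs the two percentages as disk%/inode%. The sketch below checks a few rows taken from the Disk Usage listing; the 10-point margin and the function names are hypothetical choices for illustration, not thresholds used by the site.

```python
# (mount point -> "disk%/inode%") copied from the Disk Usage listing.
capacity = {
    "/home": "76%/12%",
    "/scratch/public": "58%/30%",
    "/scratch/sao_tess": "47%/83%",
    "/scratch/schultzt": "60%/75%",
}

MARGIN = 10  # hypothetical tolerance, in percentage points

def parse_capacity(text):
    """Split a string like '47%/83%' into (disk_pct, inode_pct) integers."""
    disk, inodes = text.split("/")
    return int(disk.rstrip("%")), int(inodes.rstrip("%"))

def inode_heavy(capacity, margin=MARGIN):
    """Return mount points whose inode use exceeds disk use by more than margin."""
    flagged = []
    for mount, text in capacity.items():
        disk_pct, inode_pct = parse_capacity(text)
        if inode_pct > disk_pct + margin:
            flagged.append(mount)
    return flagged

print(inode_heavy(capacity))
```

With these four rows, only /scratch/sao_tess and /scratch/schultzt are reported, since they are the ones where the inode percentage clearly exceeds the disk percentage.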

Disk Quota Report

Volume=NetApp:vol_data_public, mounted as /data/public; default quota: 4.50TB/10.0M

| Disk | disk usage | %quota | #files usage | %quota | name, affiliation - username (indiv. quota) |
|---|---|---|---|---|---|
| /data/public | 4.13TB | 91.8% | 5.07M | 50.7% | Alicia Talavera, NMNH - talaveraa |
| /data/public | 3.98TB | 88.4% | 0.00M | 0.0% | Zelong Nie, NMNH - niez |

Volume=NetApp:vol_home, mounted as /home; default quota: 384.0GB/10.0M

| Disk | disk usage | %quota | #files usage | %quota | name, affiliation - username (indiv. quota) |
|---|---|---|---|---|---|
| /home | 363.9GB | 94.8% | 0.28M | 2.8% | Juan Uribe, NMNH - uribeje |
| /home | 359.8GB | 93.7% | 2.84M | 28.4% | Brian Bourke, WRBU - bourkeb |
| /home | 359.2GB | 93.5% | 2.10M | 21.0% | Michael Trizna, NMNH/BOL - triznam |
| /home | 331.6GB | 86.4% | 0.27M | 2.7% | Paul Cristofari, SAO/SSP - pcristof |
| /home | 328.1GB | 85.4% | 0.00M | 0.0% | Allan Cabrero, NMNH - cabreroa |

Volume=GPFS:scratch_public, mounted as /scratch/public; default quota: 15.00TB/39.8M

| Disk | disk usage | %quota | #files usage | %quota | name, affiliation - username (indiv. quota) |
|---|---|---|---|---|---|
| /scratch/public | 17.20TB | 114.7% | 3.02M | 0.0% | *** Ting Wang, NMNH - wangt2 |
| /scratch/public | 15.20TB | 101.3% | 1.56M | 0.0% | *** Juan Uribe, NMNH - uribeje |
| /scratch/public | 15.00TB | 100.0% | 0.00M | 0.0% | *** Rebeka Tamasi Bottger, SAO/OIR - rbottger |
| /scratch/public | 14.20TB | 94.7% | 4.24M | 0.0% | Kevin Mulder, NZP - mulderk |
| /scratch/public | 14.10TB | 94.0% | 0.08M | 0.0% | Samuel Vohsen, NMNH - vohsens |
| /scratch/public | 14.00TB | 93.3% | 35.12M | 0.0% | Alberto Coello Garrido, NMNH - coellogarridoa |
| /scratch/public | 14.00TB | 93.3% | 0.08M | 0.2% | Qindan Zhu, SAO/AMP - qzhu |
| /scratch/public | 13.80TB | 92.0% | 27.71M | 0.0% | Zelong Nie, NMNH - niez |
| /scratch/public | 13.50TB | 90.0% | 2.09M | 0.0% | Solomon Chak, SERC - chaks |

Volume=GPFS:scratch_stri_ap, mounted as /scratch/stri_ap; default quota: 5.00TB/12.6M

| Disk | disk usage | %quota | #files usage | %quota | name, affiliation - username (indiv. quota) |
|---|---|---|---|---|---|
| /scratch/stri_ap | 14.60TB | 292.0% | 0.05M | 0.0% | *** Carlos Arias, STRI - ariasc |

Volume=NAS:store_public, mounted as /store/public; default quota: 0.0MB/0.0M

| Disk | disk usage | %quota | #files usage | %quota | name, affiliation - username (indiv. quota) |
|---|---|---|---|---|---|
| /store/public | 4.80TB | 96.1% | - | - | *** Madeline Bursell, OCIO - bursellm (5.0TB/0M) |
| /store/public | 4.71TB | 94.2% | - | - | Zelong Nie, NMNH - niez (5.0TB/0M) |
| /store/public | 4.51TB | 90.1% | - | - | Alicia Talavera, NMNH - talaveraa (5.0TB/0M) |
| /store/public | 4.39TB | 87.8% | - | - | Mirian Tsuchiya, NMNH/Botany - tsuchiyam (5.0TB/0M) |

SSD Usage

/ssd usage per node

| Node name | Size | Used | Avail | Use% | Resd | Avail | Resd% | Resd/Used |
|---|---|---|---|---|---|---|---|---|
| 64-17 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 64-18 | 3.49T | 24.6G | 3.47T | 0.7% | 0.0G | 3.49T | 0.0% | 0.00 |
| 65-02 | 3.49T | 24.6G | 3.47T | 0.7% | 0.0G | 3.49T | 0.0% | 0.00 |
| 65-03 | 3.49T | 24.6G | 3.47T | 0.7% | 0.0G | 3.49T | 0.0% | 0.00 |
| 65-04 | 3.49T | 24.6G | 3.47T | 0.7% | 0.0G | 3.49T | 0.0% | 0.00 |
| 65-05 | 3.49T | 24.6G | 3.47T | 0.7% | 0.0G | 3.49T | 0.0% | 0.00 |
| 65-06 | 3.49T | 24.6G | 3.47T | 0.7% | 0.0G | 3.49T | 0.0% | 0.00 |
| 65-10 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 65-11 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 65-12 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 65-13 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 65-14 | 1.75T | 20.5G | 1.73T | 1.1% | 199.7G | 1.55T | 11.2% | 9.75 |
| 65-15 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 65-16 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 65-17 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 65-18 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 65-19 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 65-20 | 1.75T | 12.3G | 1.73T | 0.7% | 1.75T | 0.0G | 100.0% | 145.42 |
| 65-21 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 65-22 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 65-23 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 65-24 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 65-25 | 1.75T | 12.3G | 1.73T | 0.7% | 1.75T | 0.0G | 100.0% | 145.42 |
| 65-26 | 1.75T | 12.3G | 1.73T | 0.7% | 1.75T | 0.0G | 100.0% | 145.42 |
| 65-27 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 65-28 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 65-29 | 1.75T | 402.4G | 1.35T | 22.5% | 0.0G | 1.75T | 0.0% | 0.00 |
| 65-30 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 75-02 | 6.98T | 50.2G | 6.93T | 0.7% | 0.0G | 6.98T | 0.0% | 0.00 |
| 75-03 | 6.98T | 50.2G | 6.93T | 0.7% | 0.0G | 6.98T | 0.0% | 0.00 |
| 75-05 | 6.98T | 50.2G | 6.93T | 0.7% | 0.0G | 6.98T | 0.0% | 0.00 |
| 76-03 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 76-04 | 1.75T | 12.3G | 1.73T | 0.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 76-05 | 1.75T | 12.3G | 1.73T | 0.7% | 1.75T | 0.0G | 100.0% | 145.42 |
| 76-06 | 1.75T | 12.3G | 1.73T | 0.7% | 1.75T | 0.0G | 100.0% | 145.42 |
| 76-13 | 1.75T | 101.4G | 1.65T | 5.7% | 0.0G | 1.75T | 0.0% | 0.00 |
| 79-01 | 7.28T | 51.2G | 7.22T | 0.7% | 0.0G | 7.28T | 0.0% | 0.00 |
| 79-02 | 7.28T | 51.2G | 7.22T | 0.7% | 0.0G | 7.28T | 0.0% | 0.00 |
| Total | 103.6T | 1.19T | 102.4T | 1.2% | 8.92T | 94.63T | 8.6% | 7.49 |

Note: the disk usage and the quota report are compiled 4x/day; the SSD usage is updated every 10m.